Silver bullet

Model Saving & loading + Model Stacking 본문

Model Saving & loading

from sklearn import datasets, model_selection, metrics, svm

iris = datasets.load_iris()

train_x, test_x, train_y, test_y = model_selection.train_test_split(iris.data, iris.target, test_size=0.3, random_state=0)

model = svm.SVC(C=1.0, gamma='auto')

model.fit(train_x, train_y)

predicted_y = model.predict(test_x)

print(metrics.accuracy_score(predicted_y, test_y))saving the trained model

import joblib

joblib.dump(model, 'model_iris_svm_v1.pkl', compress=True)loading the trained model

import joblib

model_loaded = joblib.load('model_iris_svm_v1.pkl')

print(metrics.accuracy_score(model_loaded.predict(test_x), test_y))

* 전처리 (min-max scaler, standard scaler) 도구를 사용했을 시, 전처리 도구도 같이 pkl 파일로 저장해둬야함.

Model Stacking

Stacking을 위한 패키지 vecstack

아래 명령어들로 xgboost & vecstack를 설치하고 Jupyter notebook 재부팅(Kernel Restart)을 진행해주세요

!pip install xgboost==1.5.2

!pip install vecstack==0.4.0from sklearn.datasets import load_iris

from sklearn.model_selection import train_test_split

from sklearn.metrics import accuracy_score

from sklearn.ensemble import ExtraTreesClassifier

from sklearn.ensemble import RandomForestClassifier

from xgboost import XGBClassifier

import warnings

warnings.filterwarnings('ignore')

from vecstack import stacking

# Load demo data

iris = load_iris()

X, y = iris.data, iris.target

# Make train/test split

X_train, X_test, y_train, y_test = train_test_split(X, y,

test_size = 0.2, random_state = 0)

# Initialize 1-st level models.

# Caution! All models and parameter values are just

# demonstrational and shouldn't be considered as recommended.

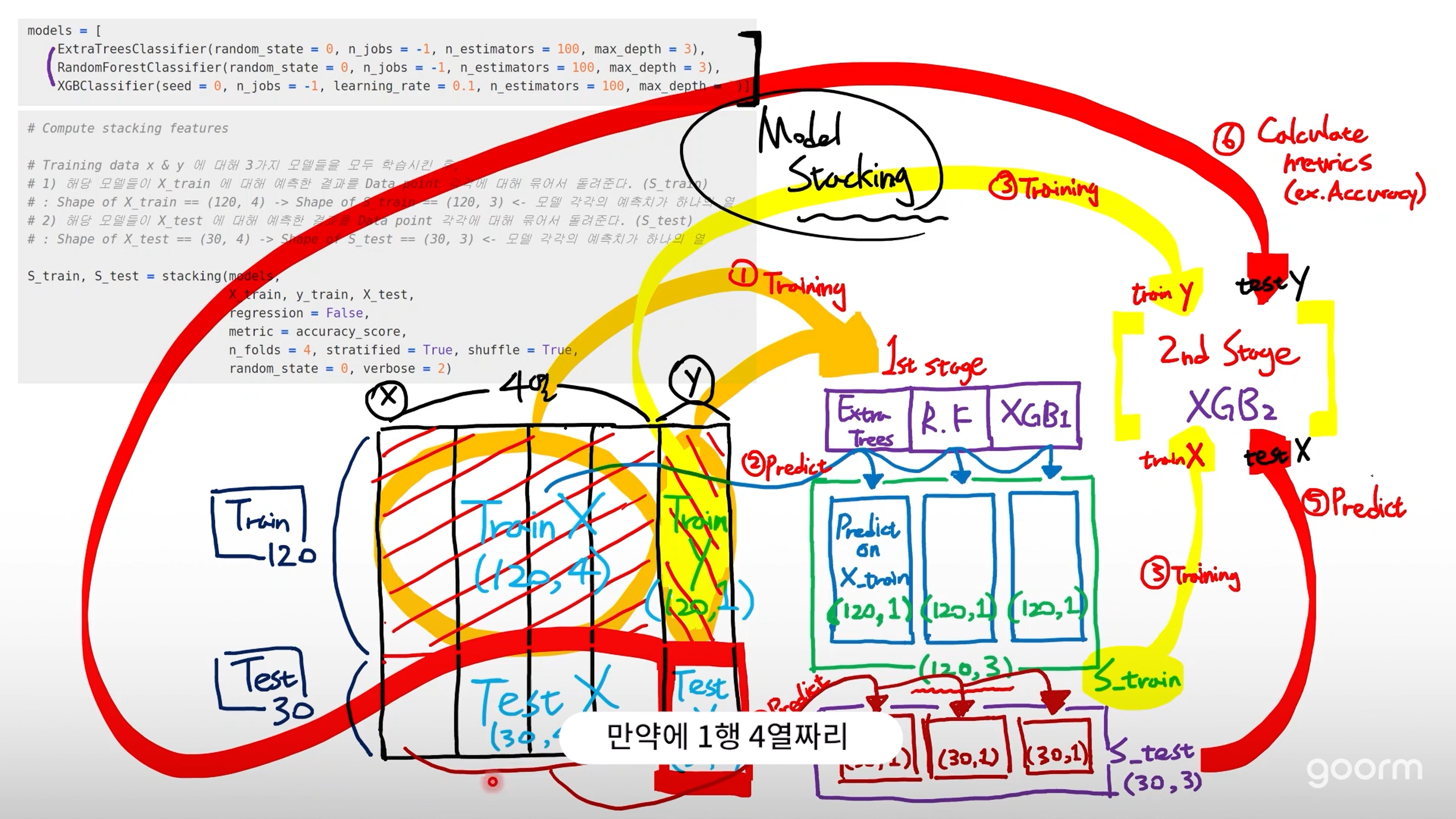

models = [

ExtraTreesClassifier(random_state = 0, n_jobs = -1, n_estimators = 100, max_depth = 3),

RandomForestClassifier(random_state = 0, n_jobs = -1, n_estimators = 100, max_depth = 3),

XGBClassifier(seed = 0, n_jobs = -1, learning_rate = 0.1, n_estimators = 100, max_depth = 3)]

# Compute stacking features

# Training data x & y 에 대해 3가지 모델들을 모두 학습시킨 후,

# 1) 해당 모델들이 X_train 에 대해 예측한 결과를 Data point 각각에 대해 묶어서 돌려준다. (S_train)

# : Shape of X_train == (120, 4) -> Shape of S_train == (120, 3) <- 모델 각각의 예측치가 하나의 열

# 2) 해당 모델들이 X_test 에 대해 예측한 결과를 Data point 각각에 대해 묶어서 돌려준다. (S_test)

# : Shape of X_test == (30, 4) -> Shape of S_test == (30, 3) <- 모델 각각의 예측치가 하나의 열

S_train, S_test = stacking(models,

X_train, y_train, X_test,

regression = False,

metric = accuracy_score,

n_folds = 4, stratified = True, shuffle = True,

random_state = 0, verbose = 2)# Initialize 2-nd level model / 여기서 하이퍼 파라미터 튜닝하면 성능 향상 기대 가능

model = XGBClassifier(seed = 0, n_jobs = -1, learning_rate = 0.1, n_estimators = 100, max_depth = 3, eval_metric='mlogloss')

# Fit 2-nd level model

# 3개의 모델이 예측한 결과인 S_train을 Feature(3개의 열)로 하여 y_train을 맞추도록 모델을 학습시킨다.

model = model.fit(S_train, y_train)

# Predict

# 앞서 3개의 모델이 예측한 결과인 S_test를 Feature로 하여 y_test를 예측한다.

y_pred = model.predict(S_test)

# Final prediction score

print('Final prediction score: [%.8f]' % accuracy_score(y_test, y_pred))Final prediction score: [0.96666667]Usage. Scikit-learn API (권장)

from vecstack import StackingTransformer

# Get your data

iris = load_iris()

X, y = iris.data, iris.target

X_train, X_test, y_train, y_test = train_test_split(X, y,

test_size = 0.2, random_state = 0)

# Initialize 1st level estimators

estimators = [

('ExtraTrees', ExtraTreesClassifier(random_state = 0, n_jobs = -1, n_estimators = 100, max_depth = 3)),

('RandomForest', RandomForestClassifier(random_state = 0, n_jobs = -1, n_estimators = 100, max_depth = 3)),

('XGB', XGBClassifier(seed = 0, n_jobs = -1, learning_rate = 0.1, n_estimators = 100, max_depth = 3, eval_metric='mlogloss'))]

# Initialize StackingTransformer

stack = StackingTransformer(estimators,

regression = False,

metric = accuracy_score,

n_folds = 4, stratified = True, shuffle = True,

random_state = 0, verbose = 2)

# Fit

stack = stack.fit(X_train, y_train)

# Get your stacked features

S_train = stack.transform(X_train)

S_test = stack.transform(X_test)

# Use 2nd level estimator with stacked features

model = XGBClassifier(seed = 0, n_jobs = -1, learning_rate = 0.1, n_estimators = 100, max_depth = 3, eval_metric='mlogloss')

model = model.fit(S_train, y_train)

y_pred = model.predict(S_test)

print('Final prediction score: [%.8f]' % accuracy_score(y_test, y_pred))Final prediction score: [0.96666667]'AI > AI' 카테고리의 다른 글

| Stratified K-Fold CV & cross_val_score (0) | 2024.07.12 |

|---|---|

| Pipeline for StandardScaler & OneHotEncoder (0) | 2024.07.12 |

| IQR 기반 Outlier 탐지 및 제거 & SMOTE Over-sampling (0) | 2024.07.12 |

| Dimensionality Reduction & PCA (0) | 2024.07.11 |

| Clustering & K-means Algorithm / 클러스터 수 결정 기법 - Elbow method & Silhouette score (0) | 2024.07.11 |